2026

April

26th April 2026

Happy Sunday! 👋

First up, some interesting news and observations...

OZU has released CinemaCLIP into the world!

OZU builds video products to understand and explore stories at scale.

We're a remote-first startup based in Brooklyn NY and backed by angels, VCs, and entrepreneurs from Figma, Atlassian, Runway, NVIDIA, and Maximum Effort.

Introducing CinemaCLIP - A hybrid CLIP model and taxonomy for the visual language of cinema

CinemaCLIP is one part of a family of models and techniques that we built at OZU to understand the art of visual storytelling, and is the backbone for a suite of tools and processes that power OZU's state of the art narrative understanding systems.

It offers a demonstrable improvement over CLIP and VLM models for many industry professional use cases. Not only is it more accurate in understanding cinematic concepts, it can also be run on commodity hardware on edge, at greatly faster than real-time rates. This enables opportunities like real-time video search and retrieval systems, live camera assist systems, on set validation, and more.

Our key contributions besides the model itself are two-fold: a dataset and ontology of visual language at the frame level, and a novel training recipe to add domain expertise to CLIP models while mitigating catastrophic forgetting. We believe this recipe is generic and can be adapted to other domains as well.

You can read more about CinemaCLIP in a detailed article on their website.

You can download it on GitHub and HuggingFace.

It'll be super interesting to see what people build with it!
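To give a rough feel for the kind of search a CLIP-style model makes possible, here's a minimal sketch of ranking text labels against a frame by cosine similarity. It assumes the embeddings have already been computed elsewhere. The three-dimensional vectors, the label names and the `rank_labels` helper are all made up for illustration; this is not CinemaCLIP's actual API or taxonomy.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def rank_labels(frame_embedding, label_embeddings):
    """Return (label, score) pairs, best match first."""
    scores = [
        (label, cosine(frame_embedding, emb))
        for label, emb in label_embeddings.items()
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)

# Hypothetical embeddings: a real model would produce hundreds of dimensions.
LABELS = {
    "close-up": [0.9, 0.1, 0.0],
    "wide shot": [0.1, 0.9, 0.1],
    "over-the-shoulder": [0.3, 0.3, 0.8],
}
frame = [0.8, 0.2, 0.1]  # made-up embedding of a single video frame

ranking = rank_labels(frame, LABELS)
best_label = ranking[0][0]
print(best_label)
```

In a real pipeline the label embeddings would be computed once and cached, so each new frame only costs one image embedding plus a handful of dot products.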

Shade Raises $14M to Build The Intelligent File System for Creative Teams. 🤯

We're excited to announce that we just raised a $14M fundraising round led by Khosla Ventures, with Construct Capital and Bling Capital. That brings Shade to $20M in total funding. This funding lets us keep building Shade as that system of record — one place for creative teams to ingest, search, review, archive, and automate, without shuttling files between six or seven tools.

This kind of investment just blows my mind. The amount of insanely awesome stuff I could build in the FCP world if I had a million dollars, let alone $14 million.

I've played around with Shade quite a bit over the years, and have seen it grow and evolve.

I've chatted with CEO Brandon Fan over Zoom.

Whilst I'm not a subscriber of Shade currently (we use a mixture of Synology Drive and Frame.io), it's certainly great to see good companies and great people kicking goals!

In other news... Apple is hiring! 🥳

They're currently trying to fill this role: Senior Software Engineer — Video Applications (FX Plug API’s)

This is great news for Final Cut Pro users, as it means that Apple is actively working on improving FxPlug - the developer technology behind Motion Templates in Final Cut Pro.

The job reads:

Description: As a Senior Software Engineer on the Motion team, you’ll be the bridge between the core engineering team and our 3rd party developer ecosystem. You’ll design and develop new FxPlug APIs that enable developers to create powerful new effects for Final Cut Pro and Motion, as well as maintaining the existing FxPlug APIs. You'll own the developer experience end-to-end—from API design to community engagement. This is a hands-on role for someone who thrives on solving complex problems by creating clear and consistent developer-facing interfaces.

Responsibilities:

- Design and implement robust, well-documented FxPlug APIs that enable 3rd party developers to extend the capabilities of our applications

- Diagnose and debug integration issues with our applications and the FxPlug APIs

- Identify and resolve performance bottlenecks in the implementation of the FxPlug APIs

- Maintain existing FxPlug APIs and their implementation.

- Write automated tests to exercise the FxPlug APIs

- Write clean, testable code

- Participate in code reviews, both giving and receiving feedback

- Create and maintain FxPlug API documentation and sample code

- Provide technical support and guidance to 3rd party developers, including communicating directly with them and helping them with troubleshooting

- Prioritize FxPlug API improvements by balancing 3rd party developer needs as well as Apple’s own roadmap

This is the part that makes me REALLY excited:

Provide technical support and guidance to 3rd party developers, including communicating directly with them and helping them with troubleshooting

As I've explained in the past, Blackmagic is the leader in 3rd party developer support.

They have a great public forum, excellent developer support and a big developer presence at trade shows like NAB.

HOPEFULLY, this job ad points to Apple offering better developer support in the future. We'll see.

In the world of vibe coding, every day there seems to be a new Final Cut Pro tool on the market.

Today I have two super interesting applications to share from two very interesting people...

Ulti.Media has released Media Matcher.

Media Matcher is a sophisticated macOS application designed to solve the critical problem of media disconnection in professional post-production. It leverages advanced mathematics and high-performance engineering to reconnect "To Match" media with original sources, regardless of filename changes.

You can watch a simple demo video on YouTube.

You can download and learn more on the Ulti.Media website.

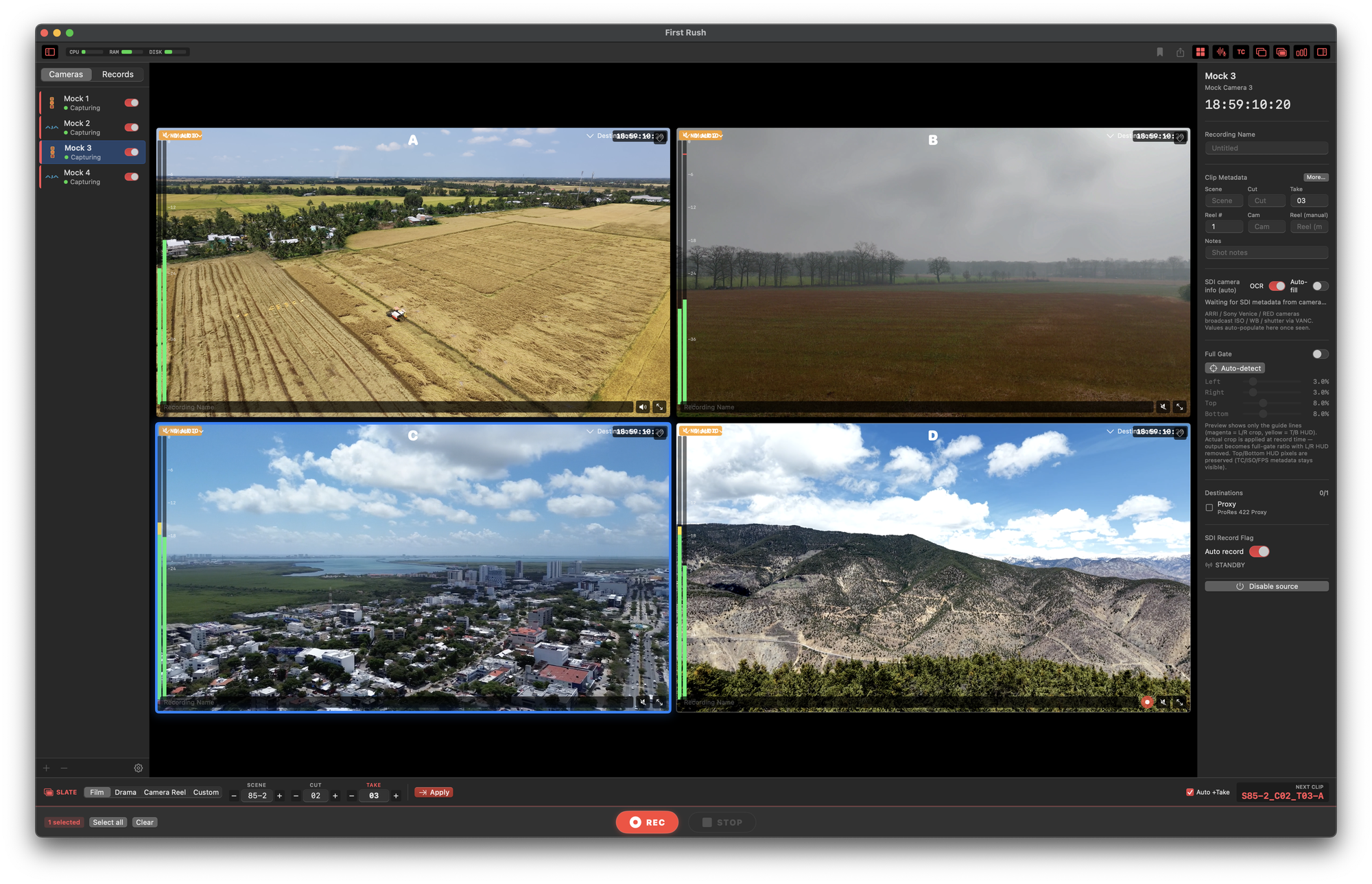

Jinkyu Han, an on-set film editor working in Seoul, Korea, has also released First Rush.

Jinkyu explains:

Long-time admirer of CommandPost — it's been quietly running on a lot of FCP rigs over here too — and a regular reader of fcp.cafe.

I just shipped 1.0 of something I built for myself called First Rush — a native macOS multi-cam SDI recorder designed around the Final Cut Pro pipeline. It came out of the same kind of frustration that produced CommandPost (workflow automation that nobody else was going to build), and I'd love to know if it might be a fit for a piece on fcp.cafe — or even a quick mention in the news feed.

What it is: A Movie Recorder alternative born from real on-set frustration. Korean drama productions stack ARRI ALEXA / Sony Venice / DJI Ronin 4D in the same shoot, and getting clean FCP-ready clips out of that mix — synchronized, with metadata that flows into Final Cut without any post-import cleanup — was always the bottleneck.

Highlights for fcp.cafe readers:

- Master camera REC trigger — detects the SMPTE RP 188 RECORD flag from ARRI ALEXA / Sony Venice / DJI Ronin 4D / Blackmagic URSA and synchronizes every video channel automatically.

- HUD OCR — reads Clip · Reel · ISO · WB · shutter directly from the camera HUD via the Vision framework, writes them into clip metadata before recording even starts.

- NLE-ready metadata — Reel · Scene · Take · Camera Name embedded into the .mov userdata atom. Final Cut Pro's Info inspector and DaVinci Resolve's metadata columns recognize them natively. No FCPXML round-trip needed for the basics.

- Multi-cam grid + gang recording — up to 4×4 layouts, ⌘-click to group cameras and start/stop in sync.

- Multi-source bulk configuration — apply Overlay / LUT / destination / auto-recording to multiple cameras in a single operation.

- Portrait 9:16 mode — for Reels / Shorts / TikTok productions where cameras are mounted sideways. Both preview and recorded output rotate.

- 16-channel audio - SDI embedded audio at 24-bit / 48 kHz with cross-source routing (route any camera's audio onto another clip — useful when the wide camera's mic is too far from the talent).

Why it might be interesting for fcp.cafe: The biggest pain point I keep hearing from FCP editors here in Korea is "the metadata never comes through cleanly." First Rush writes exactly what Final Cut Pro reads — Reel · Scene · Take · Camera columns populate themselves — so the editor opens the project and starts cutting, no manual tagging. Tested through real Korean drama productions before 1.0.

- Stack: Native Swift / SwiftUI / Metal · Apple Silicon optimized · macOS 14+

- Capture: Blackmagic DeckLink / AJA Kona / IoX3

- Output: ProRes (Proxy / 422 / HQ / 4444), H.264, HEVC

Try it: v1.0 build (7-day free trial, no key required)

Thanks for fcp.cafe and CommandPost — both have been quiet companions for our part of the FCP world.

This looks super interesting!

You can download and learn more on the First Rush website.
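If you're curious what "embedded into the .mov userdata atom" looks like at the file level, here's a minimal sketch that walks QuickTime atoms (a 32-bit big-endian size followed by a four-character type) and lists what sits inside `moov` > `udta`. The sample bytes are synthetic and the payload is simplified. I don't know which keys First Rush actually writes, so `©nam` is only a stand-in, and the `iter_atoms` and `list_udta_types` helpers are hypothetical names of my own.

```python
import struct

def iter_atoms(data, start=0, end=None):
    """Yield (type, payload_start, payload_end) for each atom in data[start:end]."""
    end = len(data) if end is None else end
    pos = start
    while pos + 8 <= end:
        size, kind = struct.unpack(">I4s", data[pos:pos + 8])
        header = 8
        if size == 1:
            # A size of 1 means a 64-bit extended size follows the type.
            if pos + 16 > end:
                break
            size = struct.unpack(">Q", data[pos + 8:pos + 16])[0]
            header = 16
        elif size == 0:
            # A size of 0 means the atom runs to the end of its container.
            size = end - pos
        if size < header or pos + size > end:
            break  # malformed atom, stop rather than guess
        yield kind.decode("latin-1"), pos + header, pos + size
        pos += size

def list_udta_types(data):
    """Return the atom types found inside moov/udta, or None if absent."""
    for kind, start, end in iter_atoms(data):
        if kind == "moov":
            for child, c_start, c_end in iter_atoms(data, start, end):
                if child == "udta":
                    return [k for k, _, _ in iter_atoms(data, c_start, c_end)]
    return None

def atom(kind, payload=b""):
    """Build a synthetic atom for the demo below."""
    return struct.pack(">I4s", 8 + len(payload), kind) + payload

# Synthetic file: ftyp, then moov containing mvhd and udta.
# The payload of the stand-in atom is simplified, not spec-accurate.
sample = atom(b"ftyp", b"qt  ") + atom(
    b"moov", atom(b"mvhd") + atom(b"udta", atom(b"\xa9nam", b"A001"))
)
udta_types = list_udta_types(sample)
print(udta_types)
```

To try it on a real clip, read the file with `open(path, "rb").read()` and pass the bytes to `list_udta_types`. For large recordings you'd want to seek through the file rather than load it all into memory.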

Enjoy the rest of your weekend!

SpliceKit has now been downloaded 670 times! 🥳

SpliceKit v3.3.5 has the following changes:

🔨 Improvements:

- Adds the Siri-style command palette with voice dictation.

- Polished liquid-glass layout and single-selection handling.

- Immersive Blackmagic RAW preview support.

- Patcher diagnostics/Sentry runtime hardening.

SpliceKit v3.3.6 has the following changes:

🔨 Improvements:

- Adds Whisper large-v3 CoreML transcription for the social captions plugin (engine picker with Parakeet v3, Whisper large-v3-turbo, and Whisper large-v3; models download on first use).

- Adds Sentry Logs support.

🐞 Bug Fixes:

- Fixes caption positions resetting to center on FCP relaunch by running the persisted-caption repair headlessly when the panel isn't open.

SpliceKit v3.3.7 has the following changes:

🔨 Improvements:

- Continues the Apple Immersive/BRAW work-in-progress with safer immersive preview decoding, reduced-resolution playback handling, fisheye/equirect preview improvements, native viewer state hardening, and crash fixes for affected users.

You can follow along with the adventure over on the FCP Cafe Discord.

You can download and learn more on the SpliceKit website.

ATEM TO FCP v1.5.2 is out now:

- This update focuses on audio accuracy, multicam consistency, keyer correctness, and import stability in Final Cut Pro.

Audio:

- Fixed preroll: sessions starting with Color Generator or Media Player now include correct audio from frame one (PGM, MIC1, MIC2).

- AFV behavior aligned with ATEM: no audio under Color/Media Player if the source has none.

- Improved audio dissolves: fades now start at transition onset with proper fade-in/out, removing audible bumps.

- Separated extended audio lanes (-1 / -2) to avoid visual merging in FCP.

- RELINK + PGM: extended audio correctly references original ISO files with ATEM mix.

Multicam:

- All available audio angles are always included (ISO WAVs, MIC1, MIC2, PGM for RELINK). Mapping selects the active angle; others remain available for post.

Keyer:

- Fixed Flying Luma/Chroma Key: correct keyer filters (Luma / Green Screen) now applied independently from DVE transforms.

- Keyer filters now correctly applied to Media Player sources (not only camera ISOs).

Stability:

- Fixed FCP import error "IDREF references unknown ID" in sessions with only KEY/USK transitions.

- Resolved "Syntax of value for attribute ref of filter-audio" by ensuring consistent transition effect allocation.

You can download and learn more on the Mac App Store.
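If you ever hit an "IDREF references unknown ID" error with your own FCPXML, a quick sanity check is to look for `ref` attributes that point at an `id` that doesn't exist anywhere in the file. Here's a minimal sketch using Python's standard library. The sample XML is hand-written and heavily simplified, the `find_dangling_refs` helper is my own name, and it only checks `ref` attributes (other attributes such as `format` also reference IDs), so treat it as a starting point and not a validator. It has nothing to do with how ATEM TO FCP fixed the issue internally.

```python
import xml.etree.ElementTree as ET

def find_dangling_refs(fcpxml_text):
    """Return (tag, ref) pairs whose ref does not match any id in the document."""
    root = ET.fromstring(fcpxml_text)
    known_ids = {el.get("id") for el in root.iter() if el.get("id") is not None}
    dangling = []
    for el in root.iter():
        ref = el.get("ref")
        if ref is not None and ref not in known_ids:
            dangling.append((el.tag, ref))
    return dangling

# Hand-written, simplified sample: the audio filter points at "r3",
# but no resource with that id is declared.
SAMPLE = """<fcpxml version="1.11">
  <resources>
    <format id="r1" name="FFVideoFormat1080p25"/>
    <effect id="r2" name="Cross Dissolve"/>
  </resources>
  <library>
    <event name="Demo">
      <project name="Demo">
        <sequence format="r1">
          <spine>
            <transition name="Cross Dissolve" offset="0s" duration="1s">
              <filter-video ref="r2"/>
              <filter-audio ref="r3"/>
            </transition>
          </spine>
        </sequence>
      </project>
    </event>
  </library>
</fcpxml>"""

dangling = find_dangling_refs(SAMPLE)
print(dangling)
```

Running this against an exported file before importing it into Final Cut Pro can at least tell you which element is pointing at a missing resource.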